The United States of America is not collectivist by nature. We celebrate a lot of individualism in this country, but rarely through the framing of personal responsibility to the public good. More often, we celebrate a liberalism that is framed through toxic masculinity, colloquially known as “rugged individualism.” This type of freedom is less concerned with the social contract (a system of bounded generalized reciprocity, one which focuses on what we can do for each other in terms of both freedom and support, and what we owe to each other in each of those respects) and instead focuses on how society should not infringe on our personal liberties. In our culture, it is easier to celebrate the self-made person than it is to ask that person to care about somebody who has achieved less or needs more. Over our nation’s history, we have moved away from “Join, or Die” and exclusively towards “Give me liberty, or give me death.” There have certainly been times in our country when we saw clearly the need for group coordination and collective action. However, over the last 50 years, we have had a cultural shift. Now, when people say “don’t tread on me,” they are often addressing their own democratically elected leaders, their neighbors, and their fellow citizens. Where we were once united to face shared challenges and common opportunities, to manage external threats and promote everyone’s ability to pursue happiness, there is now a mentality that pits a sense of independence against meaningful contribution to the system that fosters it. The saying used to be “no taxation without representation,” but now any form of taxation from our representatives is seen as intrinsically oppressive because it takes money from individuals and reallocates it to social programs.

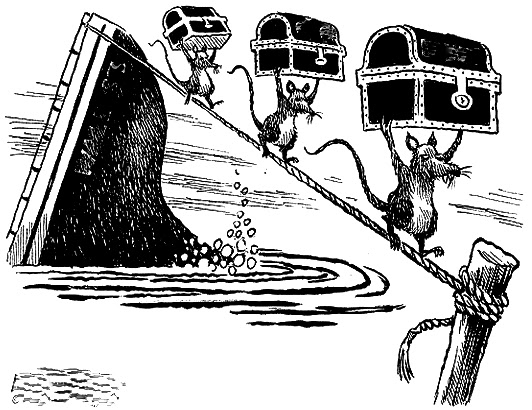

It has been demonstrated in our society that we care more about individual success than collective good. We measure our State of the Union by GDP and stock market prices, rather than the sustainability of minimum wage or the general quality of life for an average citizen. We care more about people on welfare testing positive for drugs than about hedge fund managers embezzling money via foreign banks. We entertain the rights of the lone baker, who insists that granting civil liberties to marginalized groups will infringe on their individual right to self-expression. Though it was not by majority, we elected a president who brags about not paying taxes. Instead of corporations being responsible to the public, states are forced to compete with each other to court companies like Amazon and Apple to establish workplaces within their borders, often with large contributions and tax breaks, only to see them outsource most positions abroad and still pay no taxes at the end of each year. This might all be fair enough, if not precisely well and good, if individual achievement were directly correlated with collective good, even to some small degree. It’s just not true that a rising tide lifts all ships, especially when that tide is being generated in somebody’s private tide pool. So, not only is individualism a poor substitute for communities and welfare, it is also not something to which each American citizen has an equal opportunity. There are many fallacies in the American dream, not least of which is the presumptive implication that we have all been prepared and equipped to run in the same race and achieve similar standing. (Embedded even more deeply than that is the fallacious assumption that achievement is equivalent to the pursuit of happiness… but that’s an issue for a different rant.) To bring things out of the abstract and into contemporary conversation, we already know that this pandemic will impact some people more than others, no matter what.
There is no sense of achievement or moral deservingness that will save somebody from contracting the disease or suffering its worst consequences. In other words, our typical sense of judgment is lost in this context. At first, people wanted to ascribe susceptibility to this disease to all sorts of factors like age and personal health, and then even race and national origin; we quickly exhausted all possible categories of marginalized populations to blame before finally realizing that the disease can potentially affect everybody equally, the only difference being its increased visibility in those populations due to their prior marginalization.

The same thing is true about our economy. We were born into this world with different levels of immunity, with better or worse systems of support in our local communities, and with a spectrum of possible outcomes that is determined by status, achievement, and often luck. In the abstract, these societal issues are instances of the same collective action problem. And, in practice, the two are connected in ways where our economic policies will continue to inhibit our healthcare recovery process. How we have handled our economic system across my lifetime leaves me doubtful that we have a culture sensitive enough to support each other through this pandemic, much less knowledgeable enough about democracy’s contractualist origins to make good on what it was built to do. More specifically, I doubt that we will be able to shift our cultural norms towards what the article linked below suggests. If our salvation rests on people being praised for inaction, on a sense of personal responsibility from each individual to contribute to our collective good, then I believe we will be facing the worst possible outcomes in relation to this pandemic.

Without an overall sense of cooperation, everybody will pivot to themselves, their families, their communities, and their industries, in that specific order. Instead of yielding some sense of independence to our representatives, whom we literally pay to manage issues of collective action, we will be protecting our children at the expense of our grandparents. We will have states trying to outbid each other for the same medical supplies. And we will have a cultural norm that still hesitates to praise self-quarantining, much less insist upon it and sanction those who refuse. It’s natural to share your two boxes of masks with just a close group of friends; human beings were built to be tribal. It’s natural to yearn for church meetings to return to their more intimate, in-person norms; human beings were built to be social, and we literally need physical touch and interpersonal networks. It’s natural for states to compete with each other in a zero-sum game with no federal coordination; no governor wants to face reelection without trying their best to serve constituents, and no voter wants any less from their governor. Lastly, it’s normal to want your cup of Starbucks and a regular haircut; a sense of safety and normalcy is not just desirable, it’s literally part of one’s health and well-being, and the sense of “cabin fever” you might be feeling is a critical psychological symptom rather than a selfish urge to be chastised. I am not here to tell anybody that their desire to reopen the economy or to return to normal social practices is a reprehensible thing by itself. It makes sense, but it doesn’t add up, so to speak.

Things won’t get better for you as an individual unless we can fix things as a society. You won’t be at less risk until we control the spread for everybody, and you won’t have all of your opportunities unless we fix the economy for everybody. Depending on your age or privilege, you may have lived a lifetime without needing to think about the welfare of other people ahead of your own agenda. Welcome to society. Please wipe your feet and remove your shoes, dust off the social contract that is well worn across human civilization, and start looking at your neighbor as part of the life raft that we all build together, and not just as an anchor.

https://www.cnn.com/2020/05/03/health/coronavirus-vaccine-never-developed-intl/index.html